The End of Software as We Know It

Late in 2025, I needed a way to process and categorize expense receipts for my company. The kind of task you would normally solve by purchasing accounting software. I spent two hours comparing options. None of them did exactly what I needed.

Then I described the problem to an AI coding agent and had it build a local web app that categorizes the receipts, pre-fills the tax-relevant fields, and flags anything unusual. An hour later, I had a working solution. Not a generic product bent to fit my case. A solution built for this specific moment.

After that, my Learn and Memory Agent improved the process. The next time I used it, the Expenses Agent had learned from my corrections. It pre-filled most fields correctly and generated a new interface in which I only had to confirm or adjust. By the fourth time, I just said: do it. And it did.

That sequence (build me the tool, confirm what is right, learn for next time) is not a product feature. It is a paradigm shift. But this is not even the end of the story. Why should I have to tell the AI agent to process the expenses at all? Why shouldn't the agent remind me to submit my latest receipts, because it knows the bookkeeper is waiting for the monthly report? At that point, the machine initiates. Not me. We are witnessing the end of software as we know it.

The Evolution of Software Interaction

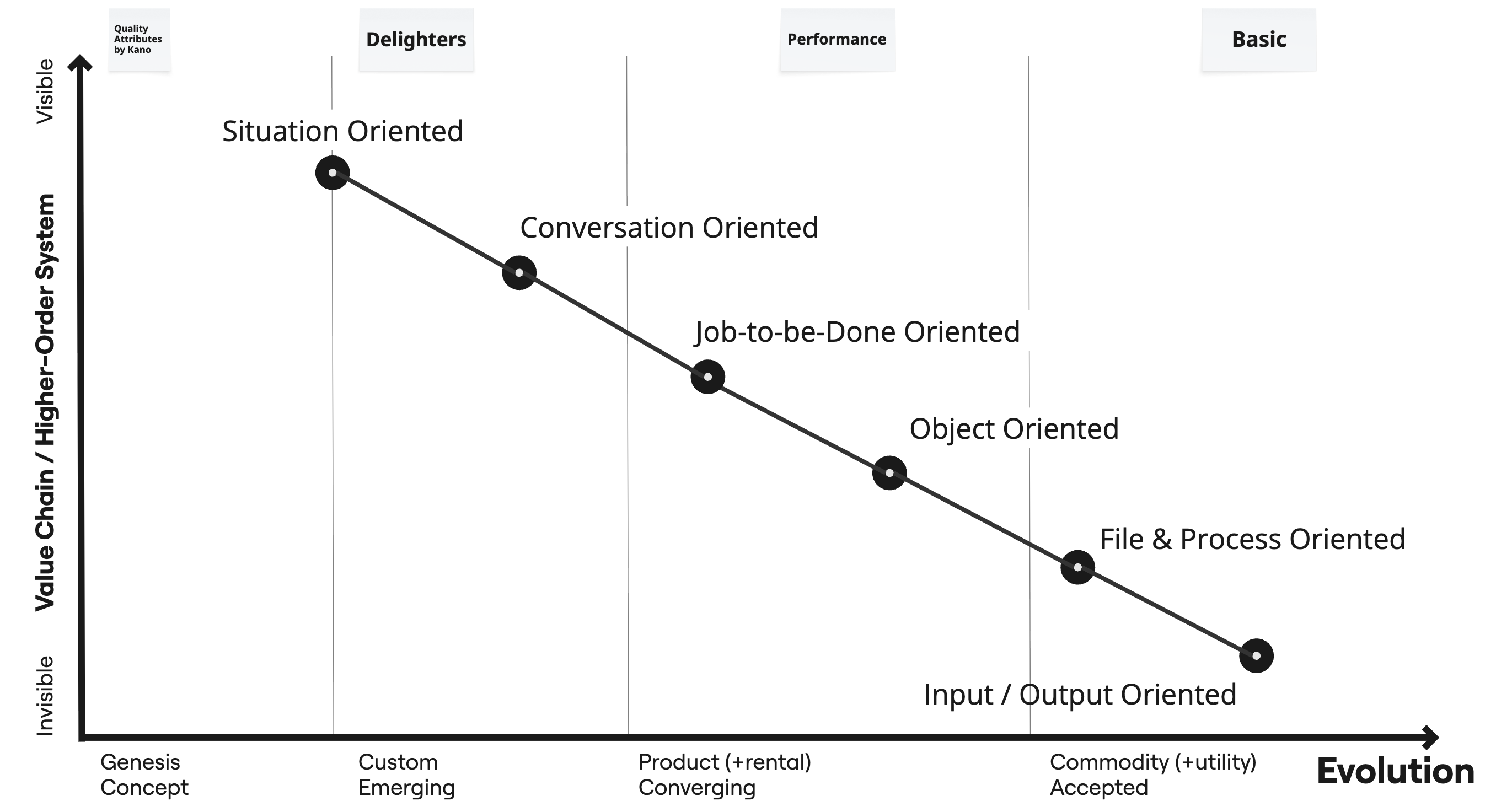

To understand what is happening, it helps to trace how humans have interacted with software across its entire history. Each stage shifts responsibility from human to machine, moving from concrete artifacts to abstract context. The progression follows distinct paradigms, each with its own mental model, its own interface style, and its own assumptions about who is in control.

| Stage | Paradigm | Machine Role | Human Role | Kano Model | Evolution |
|---|---|---|---|---|---|
| Input/Output Oriented | Command-centric | Executes instructions | Operator | Basic | Commodity |
| File & Process Oriented | Structure-centric | Passive tool | Operator | Basic | Commodity |
| Object Oriented | Entity-centric | Passive tool, flexible | Operator | Performance | Product (+Rental) |
| Job-to-be-Done Oriented | Task-centric | Reactive to clicks | Director | Performance | Product (+Rental) |
| Conversation Oriented | Intent-centric | Reactive to language | Director | Delighter | Custom-Built Emerging |
| Situation Oriented | Context-centric | Proactive | Governor | Delighter | Genesis |

Two columns deserve special attention. The Kano Model captures how users perceive each paradigm. What once excited customers eventually becomes expected. File management was a revelation in the 1980s. Today nobody is impressed by “File > Save As.” Conversational AI still delights in 2026, but in a few years users will simply expect it. Early paradigms have become Basic quality: taken for granted, noticed only when missing. Middle stages still offer Performance quality: the more, the better. The latest stages deliver Delighter quality: unexpected, differentiating, hard to compete against. A product stuck at Basic competes on price. One that delivers the next Delighter leads the market.

The Evolution column connects each paradigm to Wardley’s evolution stages. Situation Oriented is at Genesis: a new paradigm, enabled by the components below it that have matured into Product or Commodity. The six stages that follow tell this story.

Input/Output Oriented (1950s-1970s)

The user submits commands or data. The machine returns results. No persistence, no state, no visual feedback. The user must know the exact syntax. The machine does nothing without explicit instruction.

Punch cards, command-line terminals, mainframe batch processing, early UNIX shells. The user thinks in commands and parameters. Ted Nelson’s Computer Lib (1974) was already a manifesto against this “priesthood of computing,” envisioning computers as universal creative media rather than fixed command executors.

File & Process Oriented (1970s-1990s)

The computer provides rigid structure. Users create, save, and manage files. Processes guide users through predefined steps. The mental model shifts to “I have a document” or “I follow a transaction.”

IBM 3270 terminals running CICS transactions, WordPerfect, Lotus 1-2-3, SAP R/3 transaction codes. “File > Save As.” Named documents on disk. Menu-driven screens. The user thinks in files and forms.

Alan Kay and Adele Goldberg’s Dynabook vision (1977) already challenged this paradigm, imagining every user as a programmer with “symmetric authoring and consuming.” The market stayed in files and forms for two more decades.

Object Oriented (1990s, largely failed)

The user works with objects directly. A printer is an object, a document is an object. You do not “open an application.” You interact with the thing itself. Objects from different sources can be embedded in one another.

OS/2 Workplace Shell, OpenDoc, OLE and ActiveX, NeXTSTEP, Taligent. Drag-and-drop objects. Compound documents. Right-click context menus on entities. The user thinks in things, not applications.

This paradigm lost commercially. But its ambition, giving users direct control over composable entities, planted seeds that are only now beginning to grow. An ACM survey (Ko et al., 2011) found that end-user programmers already outnumbered professional developers. The demand for users to build their own tools was massive. The technology was not ready.

Job-to-be-Done Oriented (2007+)

“There’s an app for that.” One app per job. No files, no objects. I want a taxi. I want food. I want to track my run. The application disappears behind the task.

iPhone App Store, Uber, Spotify, Slack, Notion, single-purpose SaaS. App icons on a home screen. Single-purpose design. The user thinks in tasks, not tools. Onboarding asks “What do you want to do?” not “Here is your file system.”

Clayton Christensen’s Jobs to be Done framework captures the underlying principle: customers do not buy products. They hire them to make progress in a specific situation.

Conversation Oriented (2022+)

The user describes intent in natural language. The machine delivers. For the first time, ambiguity is allowed. You do not need to know which app or which button. But the machine remains passive: it waits for the human to speak.

ChatGPT, Claude, GitHub Copilot, Microsoft Copilot in Office, and the emerging field of Malleable Software, where users reshape their tools at runtime through natural language.

A text input field. Natural language instead of buttons. The user thinks in wishes, not workflows.

Conversation is the last stage of the old paradigm. The human still initiates. Just more comfortably.

Situation Oriented (Genesis)

The machine initiates. It recognizes the situation, generates the appropriate interface, learns from feedback, and eventually acts autonomously. There is no pre-built application.

“The most profound technologies are those that disappear.” (Mark Weiser, The Computer for the 21st Century, 1991)

OpenClaw, an autonomous AI agent with a Heartbeat system that initiates actions without human prompts. Claude Code, which generates tools on demand. AI agents that chain actions autonomously. Adaptive dashboards that reconfigure based on context.

No fixed UI. No app to install. The interface is generated for the moment and discarded after. The user thinks in outcomes, or does not need to think at all.

There are no applications anymore. Only just-in-time solutions.

The Software Inversion

Everything up to and including Conversation Oriented is human-driven. The human initiates, the machine responds. Situation Oriented is machine-driven. The machine acts, the human governs.

This is the fundamental inversion. Not a gradual shift, but a reversal of the basic relationship between human and machine. For the first time in the history of computing, the default mode is not “the human tells the machine what to do” but “the machine acts and the human sets boundaries.”

The human role evolves across three stages:

- Operator (Input/Output, File & Process, Object Oriented): The human executes. They know the commands, manage the files, manipulate the objects. The machine is a passive tool.

- Director (Job-to-be-Done, Conversation Oriented): The human delegates. They choose the app, describe the intent, direct the conversation. The machine reacts, but only when asked.

- Governor (Situation Oriented): The human sets constraints and reviews outcomes. The machine anticipates, proposes, and acts. The human adjusts boundaries, or simply trusts.

The question is no longer “What should the machine do?” It becomes “What should the machine not do?”
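The Governor role can be made concrete with the receipt example from the opening. The following is a minimal sketch, not a description of any real agent: it assumes the governor expresses boundaries as a few hypothetical rules (an amount limit, permitted categories, blocked vendors). The agent files everything inside those boundaries on its own and hands the rest back to the human as exceptions. `Receipt`, `Boundaries`, and `process` are illustrative names only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Receipt:
    vendor: str
    amount: float
    category: str


@dataclass
class Boundaries:
    """Hypothetical constraints set by the human governor: what the machine must NOT do alone."""
    max_amount: float = 500.0
    allowed_categories: frozenset[str] = frozenset({"travel", "software", "office"})
    blocked_vendors: frozenset[str] = frozenset()

    def violations(self, r: Receipt) -> list[str]:
        # Collect every rule this receipt breaks; an empty list means "within bounds".
        problems = []
        if r.amount > self.max_amount:
            problems.append(f"amount {r.amount:.2f} exceeds limit {self.max_amount:.2f}")
        if r.category not in self.allowed_categories:
            problems.append(f"category '{r.category}' not allowed")
        if r.vendor in self.blocked_vendors:
            problems.append(f"vendor '{r.vendor}' is blocked")
        return problems


def process(receipts: list[Receipt], bounds: Boundaries):
    """Act autonomously inside the boundaries; surface everything else as an exception."""
    filed, exceptions = [], []
    for r in receipts:
        problems = bounds.violations(r)
        if problems:
            exceptions.append((r, problems))  # the human reviews only these
        else:
            filed.append(r)  # the machine acts without asking
    return filed, exceptions
```

The design choice mirrors the inversion: the human never lists what the agent should do, only where its authority ends.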

Three Steps to Autonomy

Situation Oriented computing does not arrive as a single leap. It unfolds in three sub-stages, each shifting more initiative from human to machine.

“Build me the interface.” The user states intent, the AI generates a solution. Still dialog-based, still explicit. But the application is not pre-built. It is created on demand, for this specific situation, and discarded afterward. My receipt tool started here: I described what I needed, and the agent built it.

“Confirm if this is correct.” The AI anticipates. It pre-fills, proposes, generates the interface before being asked. The human becomes a reviewer, not an initiator. When my agent learned my categorization patterns and presented its own suggestions for confirmation, it had reached this stage.

“Just do it.” Autonomous execution. The human delegates completely. The agent processes, categorizes, files, and only surfaces exceptions. The human role reduces to governance: defining what “correct” means, setting boundaries, reviewing outcomes periodically. David Tennenhouse called this “proactive computing” in 2000: the human leaves the interaction loop.

These three sub-stages coexist. Simple, well-understood tasks reach “just do it” quickly. Novel or high-stakes tasks may stay at “build me the interface” indefinitely. The appropriate level depends on the situation, not on the technology.
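The idea that the level of autonomy depends on the situation can be sketched as a simple policy. This is a minimal illustration under stated assumptions, not how any particular agent decides. It assumes the agent remembers how many consecutive runs of a task the human confirmed without correction, treats unseen or high-stakes tasks as "build me the interface," and moves to "just do it" after a hypothetical threshold of two clean confirmations. `Autonomy`, `TaskHistory`, `choose_autonomy`, and `min_streak` are invented names.

```python
from dataclasses import dataclass
from enum import Enum


class Autonomy(Enum):
    BUILD = "Build me the interface"
    CONFIRM = "Confirm if this is correct"
    DO = "Just do it"


@dataclass
class TaskHistory:
    """Hypothetical per-task memory kept by the agent."""
    runs: int = 0
    accepted_streak: int = 0  # consecutive runs confirmed without any correction

    def record(self, corrected: bool) -> None:
        # A human correction resets trust; a clean confirmation extends it.
        self.runs += 1
        self.accepted_streak = 0 if corrected else self.accepted_streak + 1


def choose_autonomy(history: TaskHistory, high_stakes: bool, min_streak: int = 2) -> Autonomy:
    """Pick a sub-stage from the situation, not from the technology."""
    if history.runs == 0 or high_stakes:
        return Autonomy.BUILD    # novel or high-stakes: generate a tool, human drives
    if history.accepted_streak < min_streak:
        return Autonomy.CONFIRM  # agent proposes, human reviews
    return Autonomy.DO           # agent acts, human governs


if __name__ == "__main__":
    receipts = TaskHistory()
    # First run needs corrections, the next two are confirmed as proposed.
    for corrected in (True, False, False):
        print(choose_autonomy(receipts, high_stakes=False).value)
        receipts.record(corrected)
    print(choose_autonomy(receipts, high_stakes=False).value)
```

Replaying the receipt story this way prints "Build me the interface", then "Confirm if this is correct" twice, then "Just do it" on the fourth run. A real agent would presumably weigh far richer signals than a streak counter.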

The business consequence is significant. Satya Nadella has argued that business applications as we know them are likely to collapse in the agent era. His reasoning: business applications are fundamentally databases with business logic on top. When AI agents take over the logic layer, the traditional model of selling packaged application software loses its foundation. What remains is data and governance. Not software.

Haven’t We Heard This Before?

Yes. In 1977, Alan Kay and Adele Goldberg envisioned the Dynabook: a personal computer where every user is a programmer. In 1993, Bonnie Nardi documented in A Small Matter of Programming how end users were already building their own tools with spreadsheets and macros, far beyond what software vendors intended. Object Oriented computing promised composable, user-controlled environments. Every decade has had its vision of the empowered end user.

The difference this time: the underlying components have evolved. In the 1990s, the enabling technologies for situation-oriented computing (natural language processing, on-demand compute, machine learning) were in Genesis. Unreliable, expensive, accessible only to specialists. Today, large language models are products approaching Commodity. Cloud compute is Commodity. The layer above them can now emerge because the layer below has matured. This is Evolution Focus in action: each evolutionary stage enables the one above it.

But the challenges are real. When every user generates their own just-in-time tools, governance becomes extraordinarily difficult. Who audits software that exists for five minutes? Who ensures compliance when the interface is generated fresh each time? For regulated environments, this is a genuine problem.

These are not objections that invalidate the paradigm. They are characteristics of Genesis. Every technology in Genesis is unstructured, hard to manage, not yet standardized. The printing press created an explosion of uncontrolled information before censorship and copyright emerged. The internet created an explosion of uncontrolled communication before regulatory frameworks caught up. Situation-oriented software will create an explosion of uncontrolled micro-applications. The governance structures will follow. But they are not here yet.

What This Means for the Enterprise

Software interaction evolves from human-operated to machine-initiated. The human role shifts from Operator through Director to Governor. And Situation Oriented computing is demanding attention.

For enterprises, the consequence is concrete: rethink your product portfolio and reorganize how you work.

Your products and services sit somewhere on this evolution. Some are still File & Process Oriented. Others have reached Conversation Oriented. A few may already be experimenting with Situation Oriented capabilities. Not all will evolve at the same pace. But the direction is clear, and falling behind means competing on price in a market where competitors deliver Delighters.

The way your organization works must change with it. When the machine initiates and the human governs, you need less operational overhead and more governance capability. Teams shift from operating tools to governing AI agents that operate on their behalf. Leadership shifts from directing specific solutions to defining the constraints within which AI-generated solutions must operate. The AI-enhanced team is the organizational expression of this inversion.

Map where each of your products sits on the evolution path. Identify the next step for each. Reorganize to match. This is Evolution Focus in practice (see Developing a Strategy for the GenAI Era).

The end of software as we know it is not the end of the need for structure. It is the beginning of a new kind of structure: one designed for governing intelligence rather than operating tools.